In the book A Brief History of Intelligence, Max S. Bennett talks about catastrophic forgetting in AI!

Catastrophic forgetting in AI is the abrupt, drastic loss of previously learned information when a neural network is trained on new, subsequent data. It happens because new optimizations overwrite parameters crucial for older tasks, causing severe performance degradation in sequential learning scenarios!

Yeah, that ‘official’ definition went over my head too, which made me forget so many things!

Listening to the book, though, you get to know what is cooking!

Here is the issue: a machine was ‘trained’ to do addition, say adding one to each number! It did that perfectly! Later it was ‘trained’ to add a different number! It did that perfectly too! By now you would think that I am a ‘perfect’ idiot!

But (a blog without a but is not a blog!) what the researchers observed is that the machine had now forgotten the addition it had learnt before! This was because it ‘learnt’ the new task by overwriting the same internal weights that held the old task!

It is like a kid forgetting the 3 times table after learning the 4 times table!
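To see the overwriting in action, here is a tiny Python sketch. It is my own toy and not the experiment described in the book. It assumes a ‘machine’ with exactly one adjustable weight `b` that predicts `x + b`; the names `train`, `error` and `XS` are made up for illustration.

```python
XS = (0.0, 1.0, 2.0, 3.0)  # toy inputs, chosen arbitrarily

def train(b, offset, epochs=200, lr=0.1):
    """Fit the single weight b so that x + b matches x + offset."""
    for _ in range(epochs):
        for x in XS:
            diff = (x + b) - (x + offset)  # prediction minus target
            b -= lr * 2 * diff             # gradient step on squared error
    return b

def error(b, offset):
    """Mean absolute error of the model x + b on the 'add offset' task."""
    return sum(abs((x + b) - (x + offset)) for x in XS) / len(XS)

b_after_a = train(0.0, offset=1.0)        # Task A: add one
b_after_b = train(b_after_a, offset=2.0)  # Task B: add two, same weight reused

print("Task A error after learning A:", round(error(b_after_a, 1.0), 3))
print("Task A error after learning B:", round(error(b_after_b, 1.0), 3))
print("Task B error after learning B:", round(error(b_after_b, 2.0), 3))
```

The Task A error starts near zero and jumps to about one after Task B, because the only weight the toy has was moved from roughly 1 to roughly 2. Real networks have millions of weights, but the same tug-of-war happens on the ones both tasks share.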

This is actually a major problem in AI systems!

Also known as “catastrophic interference,” this phenomenon occurs when an AI system is trained sequentially, for example on Task A and then on Task B, and ends up failing at Task A!

This issue is a major barrier to Artificial General Intelligence (AGI), as models cannot learn continuously over time without retraining on everything, which is inefficient and can also raise AI security risks!

Practically speaking, an AI model that has been ‘released’ can get better at giving results, but it cannot really ‘learn’ anything new! For example, if a new version of ChatGPT is released in 2026, it can tell you new stuff, but what it has learnt stays practically stuck in 2026 forever!

Researchers are exploring several strategies to overcome this issue, and this is where the book comes into play!

The solutions are mostly based on how the HUMAN BRAIN mitigates catastrophic forgetting! The brain usually does not forget old memories when new memories are formed! Which is why the average human brain’s ability to consolidate memory is the solution to catastrophic forgetting!

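Two such techniques are Elastic Weight Consolidation (EWC), which penalizes changes to the weights that mattered most for the old task, and replay buffers, which mix stored old examples into the training on new data.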
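Here is a rough Python sketch of both ideas on a toy problem. It is an illustration under my own assumptions and not code from the book or from any library. The model predicts `x + b + c * t`, where `t` is a made-up task flag (0 for ‘add one’, 1 for ‘add two’), so `b` is shared by both tasks. The ‘importance’ used for the EWC-style penalty is the mean squared sensitivity of the prediction to each weight on Task A, a simple stand-in for the Fisher information that EWC uses. All function names and the numbers for `lr`, `epochs` and `strength` are hypothetical.

```python
XS = (0.0, 1.0, 2.0, 3.0)

def make_task(task_flag, offset):
    """Examples are (x, task_flag, target) with target = x + offset."""
    return [(x, task_flag, x + offset) for x in XS]

TASK_A = make_task(0.0, 1.0)  # "add one"
TASK_B = make_task(1.0, 2.0)  # "add two"

def predict(params, x, t):
    b, c = params
    return x + b + c * t

def mean_error(params, task):
    return sum(abs(predict(params, x, t) - y) for x, t, y in task) / len(task)

def sgd_step(params, example, lr, anchor=None, importance=(0.0, 0.0), strength=0.0):
    """One gradient step on squared error, plus an optional EWC-style penalty."""
    b, c = params
    x, t, y = example
    diff = predict(params, x, t) - y
    grad_b = 2 * diff      # d(diff^2)/db
    grad_c = 2 * diff * t  # d(diff^2)/dc
    if anchor is not None:
        # Gradient of (strength / 2) * importance * (weight - old_weight)^2
        grad_b += strength * importance[0] * (b - anchor[0])
        grad_c += strength * importance[1] * (c - anchor[1])
    return (b - lr * grad_b, c - lr * grad_c)

def fit(params, examples, epochs=500, lr=0.05, **penalty):
    for _ in range(epochs):
        for example in examples:
            params = sgd_step(params, example, lr, **penalty)
    return params

after_a = fit((0.0, 0.0), TASK_A)

# 1) Naive sequential training: Task B drags the shared weight b away.
naive = fit(after_a, TASK_B)

# 2) Replay buffer: keep a couple of old examples and mix them in.
buffer = TASK_A[:2]
replayed = fit(after_a, TASK_B + buffer)

# 3) EWC-style penalty: weights that mattered for Task A are held in place.
importance = (
    sum(1.0 for _ in TASK_A) / len(TASK_A),          # (d pred / d b)^2 = 1
    sum(t * t for _, t, _ in TASK_A) / len(TASK_A),  # (d pred / d c)^2 = t^2
)
ewc = fit(after_a, TASK_B, anchor=after_a, importance=importance, strength=10.0)

for name, params in (("naive", naive), ("replay", replayed), ("ewc", ewc)):
    print(name, round(mean_error(params, TASK_A), 3), round(mean_error(params, TASK_B), 3))
```

In this toy the naive run ends with a Task A error of about 0.5, while the replay and EWC-style runs keep both errors near zero: they leave the shared weight `b` where Task A needs it and let the Task B weight `c` do the new work.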

Some other approaches, beyond the scope of my brain and this blog, include methods that add new, specialized neurons for new tasks rather than updating existing ones!

One interesting approach proposed by Google is Nested Learning! This approach treats AI as a system of smaller, nested optimization problems with different learning speeds, mimicking biological memory! Like the short-term and long-term memory in our brain!
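To get a feel for the ‘different learning speeds’ part only, here is a small Python sketch. To be clear, this is NOT Google’s Nested Learning method. It is just my own toy with two memories that learn the same stream of numbers at different rates. The rates, the stream and the `update` helper are all made up for illustration.

```python
def update(memory, observation, rate):
    """One gradient step on 0.5 * (observation - memory) ** 2."""
    return memory + rate * (observation - memory)

FAST_RATE, SLOW_RATE = 0.5, 0.01  # hypothetical learning speeds
fast, slow = 0.0, 0.0

# A long-standing habit (1.0) followed by a brief surprise (5.0).
stream = [1.0] * 1000 + [5.0] * 5

for observation in stream:
    fast = update(fast, observation, FAST_RATE)  # short-term: chases recent data
    slow = update(slow, observation, SLOW_RATE)  # long-term: changes reluctantly

print("fast memory:", round(fast, 2))
print("slow memory:", round(slow, 2))
```

After the brief surprise the fast memory sits close to 5 while the slow memory is still close to 1, so the old knowledge has not been wiped out by a few new examples.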

In the end, the perfect solution is the HUMAN BRAIN!

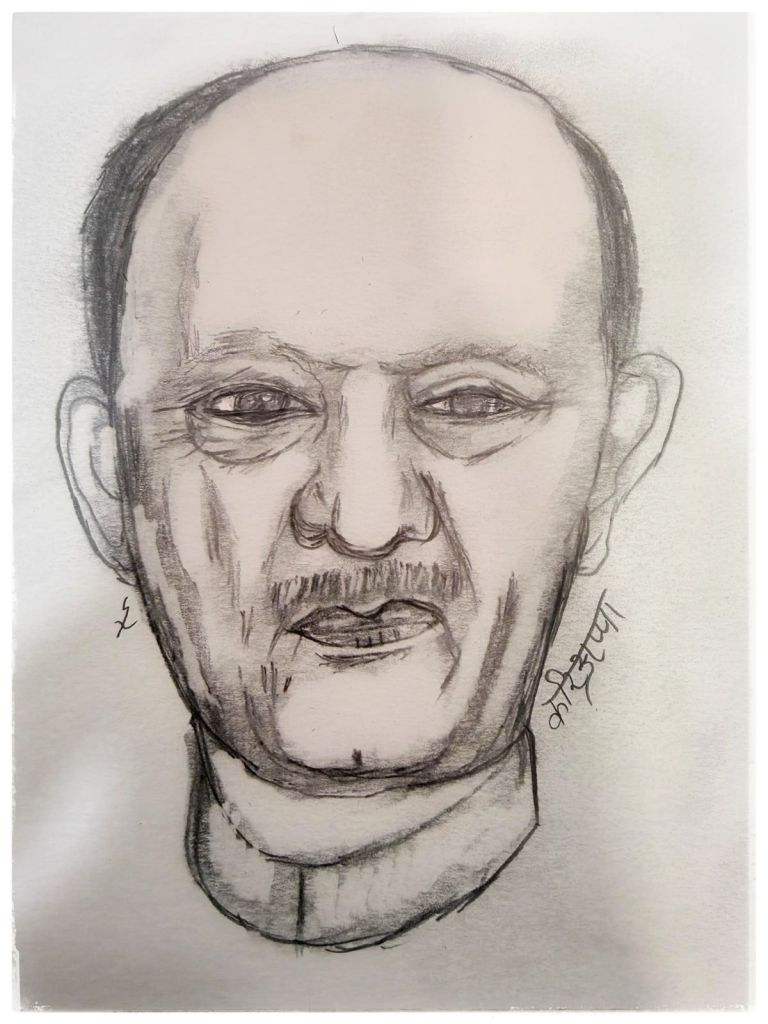

Which is why the average Indian Human Brain will never forget the contributions of Field Marshal Kodandera Madappa Cariappa!

Now give the soft hard drive of your body a well-deserved break, aka sleep!

Shubh ratri (good night)!